Voice AI agents are changing how businesses communicate. They answer calls, book appointments, and resolve routine customer problems without a human on the line. These systems understand what people say and respond naturally in real time.

Voice AI agents work by listening to speech, converting it to text, understanding the meaning using AI, generating smart responses, and speaking back in human-like voices, all happening in under one second.

Here’s why businesses love them:

- Handle unlimited calls simultaneously without hiring more staff

- Work 24/7 without breaks, including weekends and holidays

- Reduce operational costs by up to 60% compared to traditional call centers

- Respond in under 800 milliseconds, creating natural conversations

- Integrate with your existing systems like CRMs and scheduling tools

What Is a Voice AI Agent?

A voice AI agent is software that talks to customers over the phone using artificial intelligence. Unlike old phone menus where you press buttons, these agents understand normal conversation and respond like humans would.

Think of them as digital employees who never get tired, never call in sick, and can handle thousands of conversations at once.

Voice AI Agents vs Voice Bots vs Virtual Assistants

People often confuse these terms, but they’re different tools:

Voice AI Agents focus on business phone calls. They handle customer support, schedule appointments, qualify sales leads, and process orders. They’re built for professional conversations that get results.

Voice Bots are simpler systems that follow scripts. They have limited responses and break when people ask unexpected questions. They’re the frustrating “press 1 for sales” systems you want to escape from.

Virtual Assistants like Alexa or Google Assistant help with personal tasks. They set timers, play music, answer general questions, and control smart homes. They’re great for consumers but not designed for business operations.

You’ve probably talked to voice AI already. When you call your bank, check appointment availability, or track a package by phone, voice AI often handles the conversation.

How Do Voice AI Agents Work? (Step-by-Step Process)

Understanding how these systems work shows you why they’re so powerful. The magic happens in eight connected steps that run in real time.

Step 1: Wake Word & Call Trigger Detection

Voice AI agents start listening in two ways:

Wake words are trigger phrases like “Hello Assistant” that activate the system. These work for devices that listen constantly but only process after hearing the specific phrase.

Event-based triggers start when something happens—an incoming call, a scheduled outbound call, or someone clicking a button. Most business systems use event triggers because they’re more private and efficient than always-listening systems.

Step 2: Voice Activity Detection (VAD)

Once active, the system needs to know when you’re talking. Voice Activity Detection separates your speech from background noise, silence, and other sounds.

This step is critical for two reasons. It avoids wasting processing power on empty audio, and it detects when you finish speaking so the response can start right away.

Modern VAD works even in noisy environments like busy streets, crowded offices, or places with background music.
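To make the idea concrete, here is a minimal sketch of energy-based voice activity detection. It assumes audio arrives as frames of 16-bit PCM samples; the threshold, the "hangover" of three silent frames, and all function names are illustrative assumptions. Production systems usually rely on trained models rather than a fixed energy threshold.

```python
import math
from typing import List, Sequence


def frame_rms(frame: Sequence[int]) -> float:
    """Root-mean-square energy of one frame of PCM samples."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def detect_speech(frames: List[Sequence[int]], threshold: float = 500.0) -> List[bool]:
    """Mark each frame as speech (True) or non-speech (False) by its energy."""
    return [frame_rms(f) >= threshold for f in frames]


def end_of_utterance(flags: List[bool], hangover_frames: int = 3) -> int:
    """Return the index of the frame at which the speaker is considered done:
    speech has been heard and is followed by `hangover_frames` consecutive
    silent frames. Returns -1 if that point is never reached."""
    heard_speech = False
    silent_run = 0
    for i, is_speech in enumerate(flags):
        if is_speech:
            heard_speech = True
            silent_run = 0
        elif heard_speech:
            silent_run += 1
            if silent_run >= hangover_frames:
                return i
    return -1
```

The hangover count is the trade-off knob: a shorter value lets the agent reply sooner, while a longer one avoids cutting in during a natural pause.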

Step 3: Speech-to-Text (STT) Conversion

This is where your voice becomes words the computer can understand. Automatic Speech Recognition technology transcribes everything you say into text.

Real-time transcription happens while you’re speaking. The system doesn’t wait for you to finish—it processes your words instantly, creating live transcripts.

Streaming technology sends audio in tiny pieces instead of waiting for complete sentences. This cuts delay time dramatically, keeping conversations flowing naturally.
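As a rough illustration of what "tiny pieces" means, the sketch below slices raw audio into fixed-duration chunks the way a streaming client might before sending them to a speech recognizer. The 16 kHz sample rate, 16-bit samples, and 20 ms chunk length are assumptions chosen for the example, not requirements of any particular service.

```python
from typing import Iterator


def stream_chunks(audio: bytes, sample_rate: int = 16000,
                  bytes_per_sample: int = 2, chunk_ms: int = 20) -> Iterator[bytes]:
    """Yield fixed-duration chunks of raw PCM audio, one at a time."""
    # Bytes per chunk = samples per second * bytes per sample * chunk duration.
    chunk_size = sample_rate * bytes_per_sample * chunk_ms // 1000
    for start in range(0, len(audio), chunk_size):
        yield audio[start:start + chunk_size]
```

With these assumed settings, each chunk is 640 bytes, so one second of audio becomes 50 chunks that can be transcribed as they arrive.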

Several factors affect accuracy:

- Background noise levels

- Audio quality from your phone or microphone

- Accents and speaking styles

- Industry-specific words and technical terms

Top systems now reach about 95% accuracy in clear conditions. Systems trained on medical, legal, or financial language recognize the terminology of those fields more reliably than general-purpose models.
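Accuracy figures like these are commonly derived from word error rate (WER): the number of word substitutions, deletions, and insertions needed to turn the transcript into the reference, divided by the number of reference words. The article does not say how its figures were measured, so treat the sketch below as one common way to compute the metric, not as the method behind the numbers above.

```python
from typing import List


def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = word-level edit distance / number of reference words."""
    ref: List[str] = reference.lower().split()
    hyp: List[str] = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # prev[j]: edit distance between the reference words processed so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)
```

One wrong word in a seven-word sentence gives a WER of 1/7, or roughly 86% word accuracy, which shows how quickly short utterances are affected by a single error.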

Step 4: Natural Language Understanding (NLU)

Now the system has your words as text. But it needs to understand what you actually mean. That’s where Natural Language Understanding comes in.

Intent detection figures out what you want. When you say “I need to change my Tuesday appointment,” the system recognizes your intent as modifying a scheduled event.

Entity extraction pulls out important details. From “Move my 2 PM Friday appointment to Monday at 10 AM,” it extracts:

- Original day: Friday

- Original time: 2 PM

- New day: Monday

- New time: 10 AM

Context memory remembers what you talked about earlier. If you then say “Actually, make it 11 instead,” the system knows you mean 11 AM on Monday for that same appointment.

This memory makes conversations feel natural instead of robotic and repetitive.
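The three ideas above can be sketched with simple rules. This is a deliberately small, rule-based stand-in for a trained NLU model: the regular expressions, intent name, and dictionary keys are assumptions made for this example and only cover the sentences quoted above.

```python
import re
from typing import Dict, Optional

DAYS = r"(monday|tuesday|wednesday|thursday|friday|saturday|sunday)"
TIME = r"(\d{1,2})\s*(am|pm)"


def detect_intent(text: str) -> Optional[str]:
    """Keyword-based intent detection (a stand-in for a trained classifier)."""
    lowered = text.lower()
    if re.search(r"\b(move|change|reschedule)\b", lowered) and "appointment" in lowered:
        return "reschedule_appointment"
    return None


def extract_entities(text: str) -> Dict[str, str]:
    """Pull the original and new day/time out of a reschedule request."""
    pattern = rf"{TIME}\s+{DAYS}.*?\bto\s+{DAYS}\s+at\s+{TIME}"
    match = re.search(pattern, text.lower())
    if not match:
        return {}
    h1, p1, d1, d2, h2, p2 = match.groups()
    return {
        "original_day": d1.capitalize(),
        "original_time": f"{h1} {p1.upper()}",
        "new_day": d2.capitalize(),
        "new_time": f"{h2} {p2.upper()}",
    }


def apply_follow_up(context: Dict[str, str], text: str) -> Dict[str, str]:
    """Resolve a follow-up such as 'make it 11 instead' using remembered context."""
    match = re.search(r"make it (\d{1,2})", text.lower())
    if not match or "new_time" not in context:
        return context
    period = context["new_time"].split()[1]  # reuse AM/PM from the earlier turn
    return {**context, "new_time": f"{match.group(1)} {period}"}
```

Passing the entities from the first request into `apply_follow_up` is what lets "make it 11 instead" resolve to 11 AM on Monday without the caller repeating the day.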

Step 5: Large Language Model (LLM) Processing

This is the brain of the operation. Large language models like GPT-4 or Claude analyze everything and decide how to respond.

These AI models handle complex situations:

- Answering questions that need reasoning

- Managing multiple requests in one sentence

- Following up on previous conversation points

- Adapting when the conversation changes direction

When you ask “What’s my account balance and when is my next payment due?”, the LLM processes both questions, determines what information to retrieve, and creates a response that addresses everything clearly.

LLMs let voice agents handle surprises. Even when you ask something unexpected or phrase things unusually, the system adapts and responds helpfully.
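Here is a hedged sketch of the two-question example. The language model is replaced by a stub (`fake_model`) that simply spots keywords, and the account data and lookup names are hypothetical. A real agent would call an actual model and query a backend system, but the shape is similar: identify every request, fetch each piece of data, then merge the results into one reply.

```python
from typing import Callable, Dict, List

# Hypothetical account data; a real agent would query a backend system.
ACCOUNT = {"balance": "$1,250.00", "next_payment_due": "March 15"}

LOOKUPS: Dict[str, Callable[[], str]] = {
    "balance": lambda: f"Your balance is {ACCOUNT['balance']}.",
    "next_payment_due": lambda: f"Your next payment is due {ACCOUNT['next_payment_due']}.",
}


def fake_model(user_text: str) -> List[str]:
    """Stand-in for an LLM call that returns which lookups the caller asked for."""
    lowered = user_text.lower()
    requested = []
    if "balance" in lowered:
        requested.append("balance")
    if "payment" in lowered and "due" in lowered:
        requested.append("next_payment_due")
    return requested


def respond(user_text: str, model: Callable[[str], List[str]] = fake_model) -> str:
    """Run every requested lookup and merge the results into one reply."""
    answers = [LOOKUPS[name]() for name in model(user_text) if name in LOOKUPS]
    if not answers:
        return "Could you tell me a bit more about what you need?"
    return " ".join(answers)
```

Keeping the model behind a plain callable makes it easy to swap the stub for a real model later without touching the lookup logic.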

Step 6: Dialogue Management

The dialogue manager controls the conversation flow. It decides what happens next at every turn based on what you say, what the business rules allow, and what makes sense conversationally.

Flow control manages conversation paths. If you verify your identity successfully, it proceeds to your request. If verification fails, it offers other authentication methods or transfers to a human.

Turn-taking manages who speaks when. Advanced systems let you interrupt (called barge-in), so you can stop the agent mid-sentence when you need to.

Error handling deals with confusion. When the system doesn’t understand, it asks clarifying questions instead of failing. If things get too complex, it smoothly transfers you to a human agent.

This component makes conversations feel genuinely interactive rather than scripted.
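Flow control and error handling can be pictured as a small state machine. The sketch below is one possible shape, assuming three states and a limit of two misunderstandings before escalation. The state names, action strings, and limits are all illustrative choices, not taken from any specific platform.

```python
from dataclasses import dataclass
from enum import Enum


class State(Enum):
    VERIFY = "verify"
    HANDLE_REQUEST = "handle_request"
    TRANSFER = "transfer_to_human"


@dataclass
class DialogueManager:
    max_misunderstandings: int = 2
    state: State = State.VERIFY
    misunderstandings: int = 0
    verification_attempts: int = 0

    def on_turn(self, understood: bool, verified: bool = False) -> str:
        """Process one caller turn and return the agent's next action."""
        if self.state is State.TRANSFER:
            return "transfer_to_human"
        if not understood:
            # Error handling: clarify first, escalate if confusion persists.
            self.misunderstandings += 1
            if self.misunderstandings > self.max_misunderstandings:
                self.state = State.TRANSFER
                return "transfer_to_human"
            return "ask_clarifying_question"
        self.misunderstandings = 0
        if self.state is State.VERIFY:
            if verified:
                self.state = State.HANDLE_REQUEST
                return "proceed_to_request"
            # Flow control: offer another method once, then hand off.
            self.verification_attempts += 1
            if self.verification_attempts == 1:
                return "offer_other_authentication"
            self.state = State.TRANSFER
            return "transfer_to_human"
        return "handle_request"
```

Because the business rules live in one place, changing the escalation policy means editing a single method rather than every conversation script.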

Step 7: Text-to-Speech (TTS) Response

Text-to-Speech converts the agent’s response into spoken words. This is where the system’s voice comes to life.

Modern TTS sounds remarkably human. Neural voice systems create natural speech with:

- Proper emphasis on important words

- Natural rhythm and pacing

- Appropriate emotional tone

- Realistic breathing and pauses

Voice customization lets businesses choose personality traits. A healthcare scheduler might sound calm and reassuring, while a sales agent could be enthusiastic and energetic.

Streaming audio generation creates sound as the response is written, not after. This eliminates waiting and makes replies feel instant.

The result? Conversations that sound and feel completely natural.
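Streaming audio generation depends on handing text to the speech engine before the full reply exists. A simple way to picture this, assuming the language model emits text fragments one at a time, is to buffer fragments until a sentence ends and release each sentence for synthesis immediately. The function name and the punctuation rule are assumptions for this sketch, and the rule is naive: it would also split on abbreviations or decimals.

```python
from typing import Iterable, Iterator


def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Group streamed text fragments into sentences so each one can be
    synthesized as soon as it is complete."""
    buffer = ""
    for token in tokens:
        buffer += token
        if buffer.rstrip().endswith((".", "!", "?")):
            yield buffer.strip()
            buffer = ""
    # Flush whatever is left when the stream ends without punctuation.
    if buffer.strip():
        yield buffer.strip()
```

The caller hears the first sentence while the rest of the reply is still being generated, which is where most of the perceived speed comes from.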

Step 8: Learning & Optimization

Voice AI agents improve over time as machine learning is applied to real conversation data.

Analytics tracking monitors performance:

- How many calls succeed

- Average call duration

- Customer satisfaction ratings

- Where people get confused

- Which phrases work best

Machine learning analyzes patterns in thousands of calls to find improvements. The system learns which wordings reduce confusion, which conversation flows convert better, and how to handle edge cases.

Models retrain on real conversation data regularly, improving accuracy with accents, industry terms, and customer preferences.

With this feedback loop in place, your voice agent becomes more effective over time.

Voice AI Agent Architecture Explained

Voice AI systems are built using different architectural approaches. Each has advantages depending on your needs.

Cascading (Pipeline) Architecture

This traditional approach chains specialized components: STT → NLU → LLM → TTS.

Each component does one job:

- Speech recognition converts audio to text

- Language understanding interprets meaning

- AI models generate responses

- Speech synthesis creates audio output

Benefits: Easy to troubleshoot and upgrade individual pieces. You can swap your speech engine without touching other components.

Drawbacks: Each handoff between components adds delay. These milliseconds add up, potentially making conversations feel slow.
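A quick latency budget shows how the delays accumulate. The per-stage timings and the handoff overhead below are assumed values picked for illustration, not measurements of any real system.

```python
from typing import Dict

# Illustrative per-stage latencies in milliseconds (assumed, not measured).
STAGE_LATENCY_MS: Dict[str, int] = {
    "stt": 200,
    "nlu": 50,
    "llm": 400,
    "tts": 150,
}
HANDOFF_MS = 20  # assumed overhead for each handoff between adjacent stages


def pipeline_latency_ms(stages: Dict[str, int], handoff_ms: int) -> int:
    """Total response latency: stage times plus one handoff per stage boundary."""
    handoffs = max(len(stages) - 1, 0)
    return sum(stages.values()) + handoffs * handoff_ms
```

With these assumed numbers the four stages take 800 ms and the three handoffs add another 60 ms, for 860 ms in total. Trimming any single stage helps, but the handoff cost only disappears when stages are merged.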

End-to-End Architecture

Newer systems use one unified model handling everything from audio input to audio output.

Benefits: Lower latency because there are no handoffs. Better understanding of tone and emotion since the model processes raw audio.

Drawbacks: More complex to build and train. Less flexible—you can’t easily upgrade just one piece.

Hybrid Architecture (Most Popular)

Most successful systems combine both approaches. They use specialized components where they excel and integrated processing where speed matters most.

This delivers:

- Fast performance with low latency

- Flexibility to customize components

- Easier troubleshooting and updates

- Better cost efficiency

Enterprise businesses prefer hybrid systems because they balance control with cutting-edge AI performance.

Key Technologies Powering Voice AI Agents

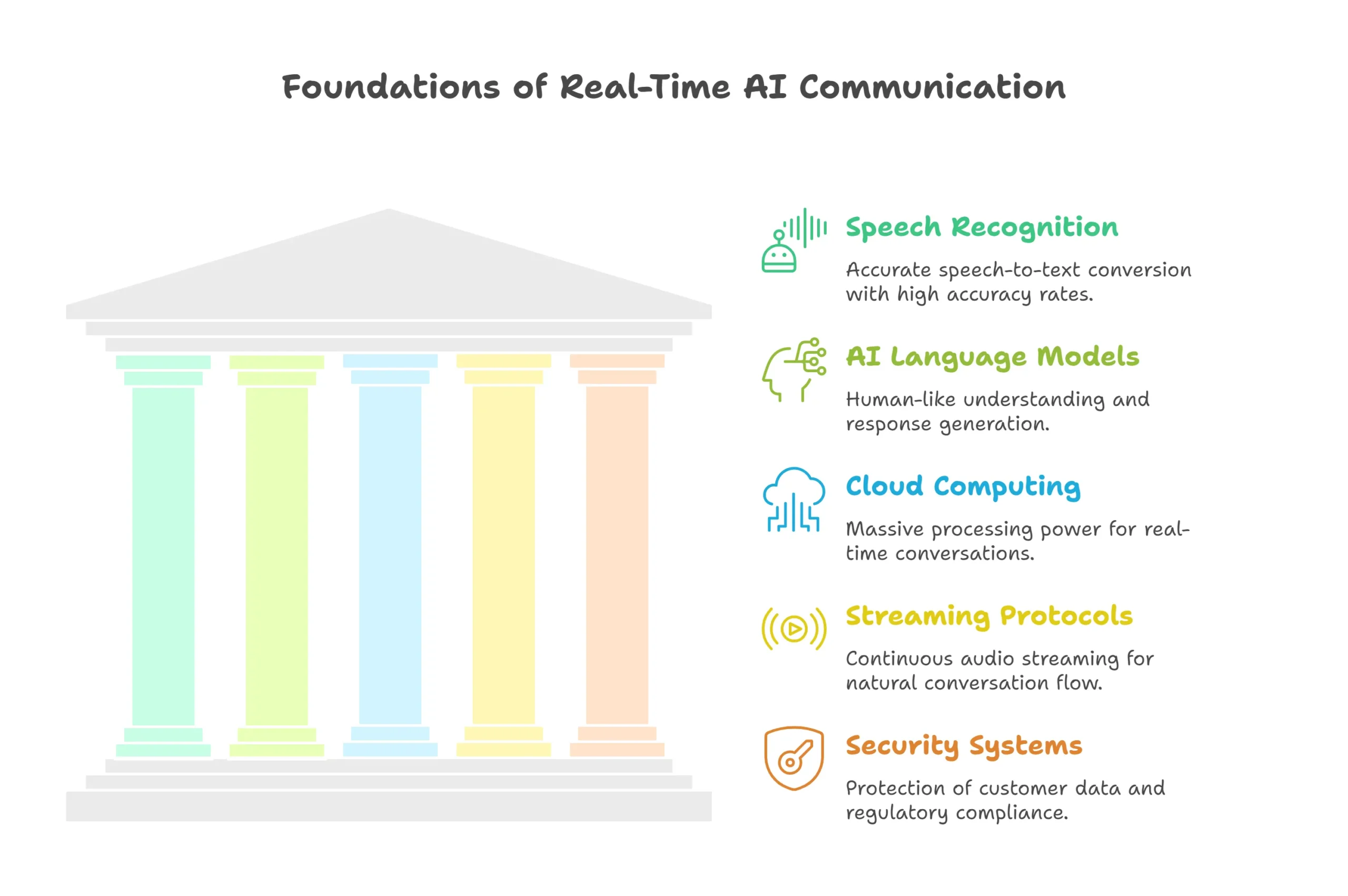

Several core technologies work together seamlessly:

Speech Recognition: Google Speech-to-Text, OpenAI Whisper, Deepgram, and Amazon Transcribe provide speech-to-text that can reach around 95% accuracy on clear audio.

AI Language Models: GPT-4, Claude, Gemini, and specialized models handle understanding and response generation with human-like intelligence.

Cloud Computing: AWS, Google Cloud, and Microsoft Azure provide massive processing power needed for real-time conversations at scale.

Streaming Protocols: WebRTC, SIP trunking, and telephony APIs connect calls and stream audio continuously for natural flow.

Security Systems: Encryption, authentication, and compliance frameworks protect customer data and meet regulatory requirements.

Real-World Use Cases of Voice AI Agents

Customer Support & Call Centers

Voice AI handles common questions 24/7. Customers get instant answers about account status, order tracking, returns, and basic troubleshooting.

No more hold music. No more “your call is important to us” while waiting 20 minutes. Instant service anytime someone calls.

Appointment Booking & Scheduling

Medical offices, salons, dental practices, and service businesses use voice AI for scheduling. The agent checks availability, books appointments, sends confirmations, and handles changes.

This eliminates phone tag completely. Customers book instantly whenever they call, even at midnight.

Sales & Lead Qualification

Voice AI makes outbound calls to qualify leads. It asks qualifying questions, answers product inquiries, and schedules demos with your sales team.

Every lead gets contacted immediately. No more leads going cold because your team was busy.

Banking & Finance

Banks use voice AI for balance inquiries, transaction history, fraud alerts, and payment processing.

Customers get instant service without waiting. Banks dramatically reduce call center costs while improving satisfaction.

E-Commerce & Retail

Online stores deploy voice agents for order tracking, return processing, product questions, and store information.

During holiday rushes, voice AI scales with call volume without additional staffing costs.

Industries Using Voice AI Agents Today

- Healthcare: Appointment scheduling, prescription refills, test results, insurance verification

- Real Estate: Property inquiries, showing appointments, buyer qualification, follow-ups

- Insurance: Claims filing, policy questions, quote generation, renewal reminders

- Travel: Reservations, booking changes, customer service, check-in assistance

- SaaS Companies: Customer onboarding, technical support, account management, renewals

How to Build and Implement a Voice AI Agent

1. Define Your Business Goal

Start with clarity. What problem are you solving? Reducing wait times? Scaling without hiring? Improving lead response speed?

Set measurable goals—call resolution rate, customer satisfaction score, cost per call, or sales conversion percentage.

2. Choose the Right Voice AI Platform

Evaluate platforms on these factors:

- Ease of use: Can non-technical staff manage it?

- Integrations: Does it connect to your CRM, calendar, helpdesk?

- Languages: Do you need multilingual support?

- Customization: How much control do you need over conversations?

- Pricing model: Per-minute, per-call, or subscription?

Popular platforms include Vapi, Bland AI, Retell AI, ElevenLabs, and enterprise solutions like Amazon Connect or Google Contact Center AI.

3. Design Conversation Flows

Map common conversation scenarios:

- Opening greetings and identification

- Questions to understand customer needs

- Information gathering sequences

- Error recovery paths

- When to transfer to humans

- Closing statements

Test these flows with real users before launching broadly.

4. Integrate CRM, Calendar, or Databases

Connect your voice agent to business systems so it can:

- Look up customer accounts

- Check product availability or appointment slots

- Create support tickets

- Schedule appointments automatically

- Process payments securely

- Update records in real time

API integrations transform your agent from information provider to action-taker.
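As a sketch of what "action-taker" means in practice, the example below books an appointment against a scheduling backend. `InMemoryCalendar` is a hypothetical stand-in for a real calendar or CRM API, and the slot strings and reply wording are invented for the example.

```python
from typing import Dict, List


class InMemoryCalendar:
    """Hypothetical stand-in for a real calendar or scheduling API."""

    def __init__(self, open_slots: List[str]) -> None:
        self._open = list(open_slots)
        self.bookings: Dict[str, str] = {}

    def available_slots(self) -> List[str]:
        return list(self._open)

    def book(self, slot: str, customer: str) -> bool:
        """Reserve a slot; return False if it is not available."""
        if slot not in self._open:
            return False
        self._open.remove(slot)
        self.bookings[slot] = customer
        return True


def handle_booking(calendar: InMemoryCalendar, customer: str, requested: str) -> str:
    """Turn a booking request into an action plus a spoken confirmation."""
    if calendar.book(requested, customer):
        return f"You're booked for {requested}."
    alternatives = calendar.available_slots()
    if alternatives:
        return f"{requested} is taken. The next opening is {alternatives[0]}."
    return "There are no openings right now. Let me transfer you to our staff."
```

In a real deployment the same `handle_booking` logic would sit in front of your scheduling system's API, so the agent's answer always reflects live availability.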

5. Test with Real Users

Run a pilot program with limited users. Monitor for:

- Speech recognition errors

- Misunderstood requests

- Conversation dead ends

- Customer frustration points

Iterate based on feedback before full deployment.

6. Deploy and Scale

Start with low-risk scenarios—FAQs, after-hours support, or simple scheduling. Expand to complex conversations as you gain confidence.

Track performance continuously.

7. Monitor, Analyze, and Optimize

Watch key metrics:

- Call completion rates

- Average handle time

- Customer satisfaction scores

- Transfer-to-human rates

- Cost per interaction

Use data to identify improvements. Retrain your models on actual calls to boost accuracy continuously.
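Most of these metrics are simple averages over call records. The sketch below assumes each call is logged with a duration, a completed flag, a transferred flag, and a cost. The `CallRecord` fields are hypothetical and would need to match whatever your platform actually exports.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CallRecord:
    duration_seconds: float
    completed: bool    # the agent resolved the caller's request
    transferred: bool  # the call was handed to a human
    cost_usd: float


def summarize(calls: List[CallRecord]) -> Dict[str, float]:
    """Compute headline metrics for a batch of calls."""
    if not calls:
        raise ValueError("no calls to summarize")
    n = len(calls)
    return {
        "completion_rate": sum(c.completed for c in calls) / n,
        "transfer_rate": sum(c.transferred for c in calls) / n,
        "avg_handle_time_s": sum(c.duration_seconds for c in calls) / n,
        "cost_per_call_usd": sum(c.cost_usd for c in calls) / n,
    }
```

Running this on a weekly export and comparing the results over time is usually enough to spot whether a change to the conversation flow helped or hurt.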

Cost of Voice AI Agents: What to Expect

Voice AI pricing varies by provider and usage:

Per-Minute: $0.05 to $0.25 per conversation minute. Simple use cases cost less; complex scenarios cost more.

Per-Call: $0.50 to $5 per call depending on length and complexity.

Monthly Subscription: $199 to $2,000+ per month, typically covering calls up to the plan’s usage limit.

Cost Factors:

- Call volume and average duration

- Number of system integrations

- Custom voice development

- Language and accent support

- Support level and SLA requirements

Return on Investment: Most businesses see 200-400% ROI within six months through labor savings, increased capacity, and improved conversion rates.

A typical call center agent costs $35,000-45,000 annually. One voice AI subscription can replace multiple agents while working 24/7.
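To compare pricing models for your own volume, a little arithmetic goes a long way. The sketch below uses the per-minute and subscription price ranges quoted above. The call volume and average call length in the usage note are assumptions you should replace with your own numbers.

```python
def per_minute_monthly_cost(calls_per_month: int, avg_minutes: float,
                            rate_per_minute: float) -> float:
    """Monthly spend under per-minute billing."""
    return calls_per_month * avg_minutes * rate_per_minute


def breakeven_minutes(subscription_usd: float, rate_per_minute: float) -> float:
    """Monthly minutes above which a flat subscription costs less than
    per-minute billing at the given rate."""
    return subscription_usd / rate_per_minute
```

For example, an assumed 2,000 calls a month averaging 3 minutes each at $0.10 per minute comes to about $600. At that same rate, a $199 subscription becomes the cheaper option once usage passes roughly 1,990 minutes a month, provided the plan's usage limit covers your volume.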

Legal, Privacy & Compliance Considerations

Call Recording Laws: Many jurisdictions require notifying callers before recording, and some require consent from every party on the call. Voice AI systems that save conversations must notify callers and obtain consent accordingly.

AI Disclosure: Some states and countries require businesses to tell customers when they’re talking to AI instead of humans. Your agent should identify itself at the start.

Data Compliance Requirements:

- GDPR for European customer data

- HIPAA for healthcare information

- PCI DSS for payment card data

- CCPA for California residents

Choose platforms with proper certifications for your industry. Security matters when handling customer conversations.

Voice AI Agents vs Chatbots: Key Differences

| Feature | Voice AI Agents | Chatbots |
| --- | --- | --- |
| Communication | Spoken conversation | Text typing |
| Channel | Phone calls, voice apps | Websites, messaging apps |
| Complexity | High: handles speech variations | Medium: processes standardized text |
| Speed | Instant real-time | Can be delayed |
| Best Use | Customer calls, sales, scheduling | Website support, FAQs |
| Accessibility | Great for multitasking, elderly | Requires reading and typing |
| User Preference | Feels personal and immediate | Convenient but less engaging |

Technology Comparison: Architecture Types

| Architecture Type | Response Speed | Flexibility | Complexity | Best For |
| --- | --- | --- | --- | --- |
| Cascading (Pipeline) | Moderate (800 ms-1.5 s) | High: easy to swap components | Medium | Businesses needing customization |
| End-to-End | Fast (400-700 ms) | Low: unified system | High | Latency-critical applications |
| Hybrid | Fast (500-900 ms) | High: combines benefits | Medium-High | Enterprise deployments |

Future of Voice AI Agents

Emotion Detection: Next-generation systems will hear frustration, happiness, or confusion in your tone and respond appropriately. Upset customers will trigger empathetic responses or automatic human escalation.

Real-Time Translation: Voice agents will conduct conversations in multiple languages simultaneously. A Spanish speaker could call and receive responses in Spanish even if the agent is primarily English-based.

Human-AI Collaboration: The future combines strengths. Voice AI handles routine tasks while seamlessly transferring complex or emotional situations to human agents.

Complete Workflow Automation: Voice agents will trigger entire business processes. A customer call could automatically generate quotes, schedule deliveries, process payments, and send confirmations without human involvement.

Frequently Asked Questions

How do Voice AI Agents work in real time?

Voice AI agents use streaming technology to process conversations instantly. As you speak, your audio is transcribed to text, understood, processed by AI, and converted to speech within 500-800 milliseconds. Streaming sends audio in small chunks rather than complete sentences, eliminating noticeable delays and creating natural conversation flow.

Are AI voice calls legal?

Yes, AI voice calls are legal when used properly. Some states and countries require you to disclose that customers are speaking with AI rather than a human, so have the agent identify itself at the start of the call. You must also comply with call recording laws that vary by location; some places require two-party consent. Follow data protection regulations like GDPR and HIPAA. Reputable voice AI platforms include built-in compliance features.

How accurate are Voice AI Agents?

Modern voice AI achieves 85-95% accuracy for clear audio and structured conversations. Accuracy depends on audio quality, background noise, how well the caller’s accent is represented in the training data, and conversation complexity. Systems improve as they are retrained on real conversations. For critical interactions, hybrid systems combining AI with human oversight deliver the highest accuracy.

Do I need developers to build a Voice AI Agent?

Not necessarily. No-code platforms let non-technical users build functional voice agents using visual interfaces and templates. However, complex needs—custom integrations, specialized security, or unique conversation logic—benefit from developer expertise. Many businesses start with no-code solutions and add custom development as requirements grow.

How much does a Voice AI Agent cost?

Voice AI costs range from $0.05 to $0.25 per minute on usage-based plans, or from $199 to $2,000+ per month on subscription plans. Small businesses start around $200-500 monthly with pay-as-you-go pricing. Enterprise solutions with advanced features cost $2,000-10,000+ monthly. Most businesses achieve over 200% ROI within six months through reduced labor costs and increased capacity.

Transform Your Business with Voice AI Today

Voice AI agents are practical business tools delivering measurable results right now. Companies using them report cost reductions of up to 60%, 24/7 availability, and improved customer satisfaction.

Whether you handle hundreds of calls daily or want to scale without proportional hiring, voice AI offers a proven solution.

Perfect for:

- Call centers overwhelmed with volume

- Service businesses with scheduling bottlenecks

- Sales teams losing leads to slow response

- E-commerce needing round-the-clock support

- Healthcare managing patient communications

- Any business where phone calls drive revenue

The technology works. The platforms are accessible. The ROI is clear.

The question isn’t whether to adopt voice AI—it’s how quickly you can implement it to gain competitive advantage.

Start today. Choose a platform, design your first conversation flow, and experience how voice AI transforms customer conversations from expensive overhead into profit-generating assets.