Healthcare organizations are racing to deploy voice AI, but one misstep could cost millions in fines and destroy patient trust.

If you’re implementing AI voice agents for patient interactions, appointment scheduling, or medical documentation, you need ironclad HIPAA compliance, not just good security practices.

This guide shows you exactly how to deploy voice AI technology that protects ePHI while automating your healthcare workflows.

What You’ll Learn:

- Why standard AI voice platforms aren’t HIPAA compliant (and what you need instead)

- The three technical pillars that make voice AI legally defensible

- How to choose vendors that will actually sign a Business Associate Agreement (BAA)

- Real-world use cases that balance automation with compliance

- A practical checklist for evaluating AI voice partners in 2025

The Rise of Voice AI in Healthcare: Opportunity Meets Risk

Healthcare providers face an administrative crisis. Physicians spend nearly two hours on documentation for every hour of patient care. Meanwhile, front desk teams drown in appointment calls, insurance verifications, and patient follow-ups.

Voice AI in healthcare promises relief. AI voice agents can handle appointment scheduling, conduct symptom triage, perform post-discharge check-ins, and even serve as real-time medical scribes during patient encounters.

But here’s the catch: deploying “standard” consumer-grade voice AI to handle patient information, without a Business Associate Agreement and the required safeguards, violates HIPAA.

Every patient conversation contains Protected Health Information. Every voice recording creates ePHI that must be secured. Every AI interaction requires strict identity verification, encryption standards, and audit trails that consumer AI platforms simply don’t provide.

The financial stakes are staggering. HIPAA violations carry penalties up to $1.9 million per violation category, per year. A single data breach affecting 500+ patients triggers mandatory federal reporting and often results in class-action lawsuits.

Beyond money, there’s trust. Patients sharing symptoms, medication struggles, or mental health concerns deserve absolute confidence that their voice data won’t be leaked, sold, or accessed by unauthorized parties.

This guide provides the mandatory framework for deploying AI voice agents that meet 2025 HIPAA compliance standards. Whether you’re a hospital system, private practice, or healthcare technology vendor, you’ll learn exactly what’s required to implement voice AI technology legally and safely.

Understanding the Foundation: HIPAA and AI Voice Technology

Before diving into technical requirements, you need to understand how HIPAA law applies to voice-based artificial intelligence.

The Definition of PHI in Audio Conversations

Protected Health Information isn’t just what’s written in medical charts. Under HIPAA, PHI includes any individually identifiable health information transmitted or maintained in any form or medium.

For voice AI in healthcare, this means:

Voice recordings containing patient names, medical conditions, treatment plans, or appointment details are PHI. Even if the AI deletes the recording after transcription, the audio existed as ePHI and must be secured during processing.

Transcripts generated from patient conversations are electronic PHI. Whether stored permanently or processed transiently, these text records fall under HIPAA security rules.

Voiceprints used for biometric authentication are considered PHI. Unlike passwords, voiceprints are biometric identifiers permanently linked to an individual’s identity.

Call metadata (timestamps, phone numbers, call duration) becomes PHI when linked to healthcare services or medical discussions.

This comprehensive definition means you can’t treat voice AI like a consumer chatbot. Every component of the voice interaction pipeline requires HIPAA-grade protection.

Covered Entities Versus Business Associates

HIPAA compliance depends on understanding your role in the data chain.

Covered entities are healthcare providers, health plans, and healthcare clearinghouses that directly handle PHI. If you’re a hospital, clinic, physician practice, or health system, you’re a covered entity with direct HIPAA obligations.

Business associates are vendors that handle PHI on behalf of covered entities. AI voice platform providers, cloud hosting companies, transcription services, and software vendors become business associates when they process healthcare data.

This distinction matters because both parties have legal obligations, but the covered entity bears ultimate responsibility for ensuring compliance across all vendors.

The Crucial Business Associate Agreement

Here’s the non-negotiable requirement: you cannot use any AI tool that accesses PHI without a signed Business Associate Agreement.

A BAA is a legal contract in which the vendor agrees to:

- Implement appropriate safeguards to protect ePHI

- Report any security incidents or breaches

- Ensure subcontractors also sign BAAs

- Allow the covered entity to audit compliance

- Return or destroy PHI when the contract ends

Even if a vendor has excellent security practices, using their platform without a signed BAA is a HIPAA violation. The BAA establishes legal accountability and defines what happens when something goes wrong.

Many popular AI platforms, including some big-name consumer voice assistants, explicitly refuse to sign BAAs because they don’t want HIPAA liability. This immediately disqualifies them from healthcare use, regardless of their capabilities.

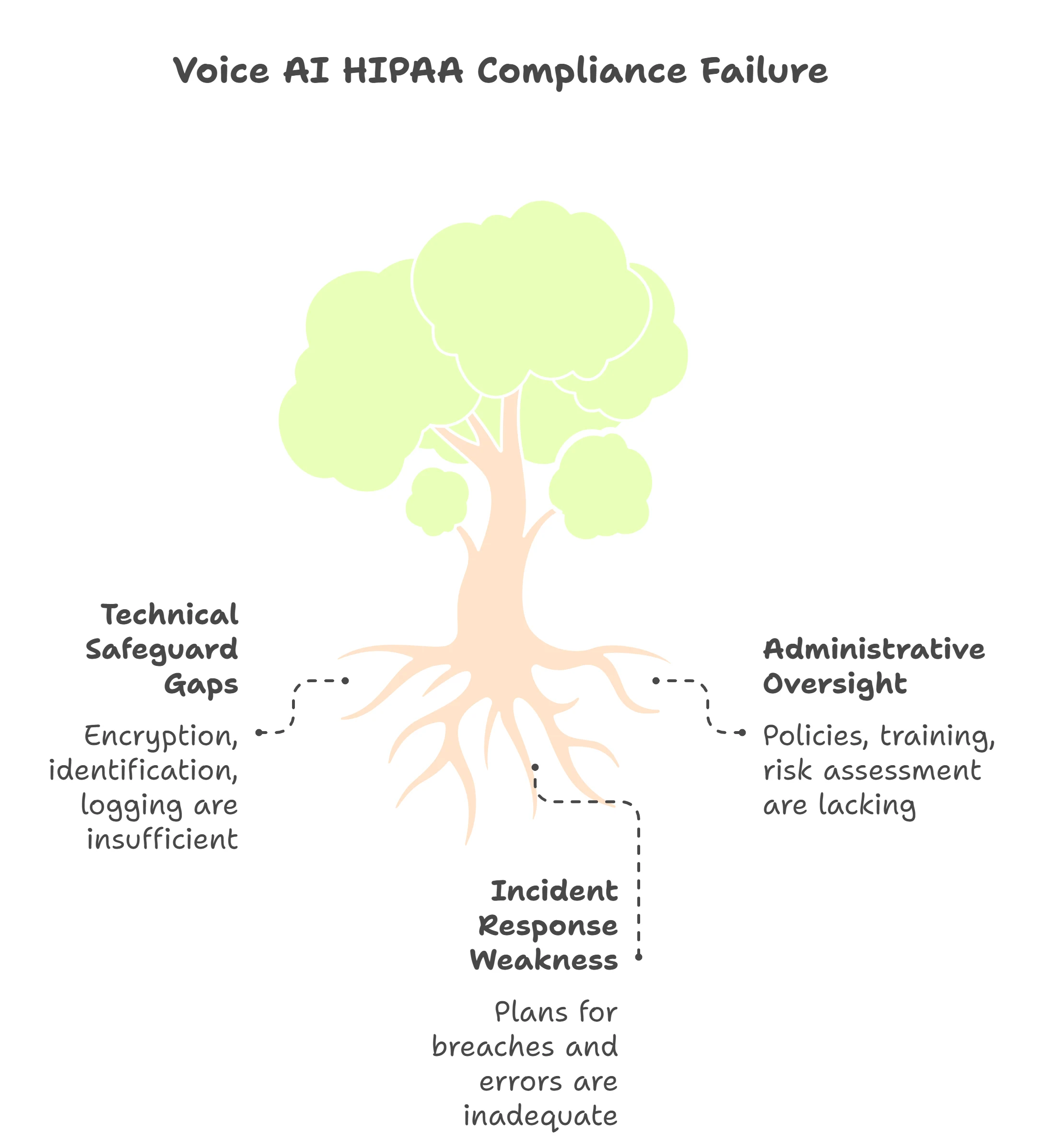

The Three Pillars of HIPAA Security for Voice AI

HIPAA’s Security Rule organizes requirements into three categories. Voice AI implementations must address all three to achieve compliance.

Pillar One: Technical Safeguards

These are the technology controls that protect ePHI security during collection, processing, storage, and transmission.

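Encryption in transit and at rest is effectively mandatory. The Security Rule classifies encryption as an “addressable” specification, but for voice data carrying PHI there is rarely a defensible alternative. For voice AI, this means: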

- AES-256 encryption for any stored audio files or transcripts

- TLS 1.3 (or newer) for all data transmission between devices, servers, and APIs

- Encrypted connections for real-time voice streaming during patient calls
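The transit requirement above can be enforced in code rather than left to library defaults. The following is a minimal sketch using Python’s standard `ssl` module, assuming a client that calls a vendor API over HTTPS; the function name `make_tls13_client_context` is illustrative and not taken from any vendor SDK.

```python
import ssl


def make_tls13_client_context() -> ssl.SSLContext:
    """Build a client-side TLS context that refuses anything older than TLS 1.3."""
    # create_default_context() turns on certificate and hostname verification.
    context = ssl.create_default_context()
    # Handshakes that negotiate TLS 1.2 or earlier will now fail.
    context.minimum_version = ssl.TLSVersion.TLSv1_3
    return context
```

A context built this way can be passed to standard clients, for example `http.client.HTTPSConnection(host, context=context)`. Encryption at rest with AES-256 typically relies on a vetted third-party library or the storage platform itself, so it is not shown here.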

Zero-retention mode is the gold standard for voice data protection. The safest PHI is PHI that doesn’t exist. Leading compliant platforms process voice transiently: they convert speech to text, execute the AI’s function, then immediately discard the audio without storing it. While transcripts may be saved for medical records, the raw voice data never persists.
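The control flow of transient processing can be sketched as follows. This is an illustration only: `transcribe_without_retention` and the `transcribe` callback are hypothetical names, and overwriting one buffer in Python does not prove that no other copy of the audio exists elsewhere (network buffers, vendor systems, swap). It shows the shape of the guarantee, not a complete implementation.

```python
from typing import Callable


def transcribe_without_retention(
    audio: bytearray,
    transcribe: Callable[[memoryview], str],
) -> str:
    """Run a transcription callback, then overwrite the caller's audio buffer."""
    try:
        # Hand the callback a view instead of a copy of the audio bytes.
        with memoryview(audio) as view:
            return transcribe(view)
    finally:
        # Runs on success and on error: zero the buffer in place so the
        # raw audio does not outlive this call.
        audio[:] = bytes(len(audio))
```

The `finally` clause is the design point: the audio is wiped even when transcription fails, so an error path cannot quietly become a retention path.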

Unique user identification ensures every person and system accessing PHI can be tracked. For voice agents, this means:

- Staff members have individual login credentials (no shared accounts)

- The AI system logs which administrator configured each voice agent

- Patient identity verification before any medical discussion occurs

Automatic log-off and session timeouts prevent unauthorized access when users step away from terminals or when calls are abandoned. Voice AI platforms should automatically terminate sessions after defined inactivity periods.
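An inactivity timeout of this kind can be sketched in a few lines. The class name `IdleSession` and the injectable `clock` parameter are assumptions made for illustration; the appropriate idle limit is a policy decision, not something HIPAA fixes to a number.

```python
import time
from typing import Callable


class IdleSession:
    """Tracks activity and reports expiry after a fixed idle period."""

    def __init__(
        self,
        idle_limit_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._idle_limit = idle_limit_seconds
        self._clock = clock
        self._last_activity = clock()

    def is_expired(self) -> bool:
        """True once the idle period has elapsed since the last activity."""
        return self._clock() - self._last_activity >= self._idle_limit

    def touch(self) -> None:
        """Record activity; an expired session cannot be revived."""
        if self.is_expired():
            raise PermissionError("session expired; re-authentication required")
        self._last_activity = self._clock()
```

Using a monotonic clock avoids sessions being extended or cut short when the system wall clock is adjusted.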

Audit controls must track every PHI access. Compliant voice AI platforms generate detailed logs showing:

- When each call occurred

- Which patient was involved (using de-identified patient IDs)

- What information was accessed or modified

- Which staff member reviewed call transcripts

- Any system errors or security events
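One common way to make such logs tamper-evident is to chain entries together with a hash, so that editing any earlier entry invalidates every later one. The sketch below assumes a simple in-memory list and hypothetical field names (`event`, `prev_hash`, `hash`); it is not any particular vendor’s log format.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, event: Dict[str, str]) -> str:
    """Hash the previous entry's hash together with this event's content."""
    payload = json.dumps(event, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + payload).hexdigest()


def append_event(log: List[dict], event: Dict[str, str]) -> None:
    """Append an event, linking it to the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append(
        {"event": event, "prev_hash": prev_hash, "hash": _entry_hash(prev_hash, event)}
    )


def verify_log(log: List[dict]) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this detects edits but does not prevent them. Someone who can rewrite the whole log can also recompute every hash, so the latest hash should be stored somewhere the log writer cannot modify.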

Pillar Two: Administrative Safeguards

Technology alone doesn’t ensure compliance. You need policies, training, and processes governing how humans interact with voice AI systems.

Risk assessments must be performed regularly. Before deploying voice AI in healthcare, conduct a thorough analysis identifying:

- What types of PHI the system will handle

- Where data vulnerabilities exist in the voice processing pipeline

- What happens if the AI misunderstands a patient or provides incorrect information

- How you’ll detect unauthorized access or data breaches

Update these assessments annually and whenever you change AI vendors or add new voice capabilities.

Workforce training ensures staff understand their compliance obligations. Every employee interacting with voice AI technology needs education on:

- What constitutes PHI in voice conversations

- How to verify patient identity before initiating AI-assisted calls

- When to escalate from AI to human oversight

- How to report suspected security incidents

Incident response plans define your action steps when problems occur. What happens if:

- The AI “hallucinates” and provides medically incorrect information to a patient?

- A voice recording is accidentally sent to the wrong patient portal?

- A cyberattack targets your voice AI vendor’s servers?

- An employee loses a device with access to voice AI call transcripts?

Your incident response plan should include notification procedures, containment steps, investigation protocols, and remediation processes.

Access controls limit PHI exposure to the minimum necessary. Not every staff member should access all voice AI functions. Implement role-based permissions so:

- Front desk staff can use scheduling bots but can’t access clinical triage recordings

- Physicians can review scribe transcripts from their own patients only

- IT administrators can configure systems without viewing patient conversations
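The three rules above map naturally onto a role-to-action table plus one ownership check. This is a minimal sketch; the role names, action strings, and the `is_allowed` signature are hypothetical and would be replaced by whatever your platform’s permission model defines.

```python
from enum import Enum
from typing import Optional


class Role(Enum):
    FRONT_DESK = "front_desk"
    PHYSICIAN = "physician"
    IT_ADMIN = "it_admin"


# Each role gets only the actions it needs (minimum necessary).
ROLE_ACTIONS = {
    Role.FRONT_DESK: frozenset({"use_scheduling_bot"}),
    Role.PHYSICIAN: frozenset({"read_scribe_transcript"}),
    Role.IT_ADMIN: frozenset({"configure_system"}),
}


def is_allowed(
    role: Role,
    action: str,
    user_id: Optional[str] = None,
    treating_physician_id: Optional[str] = None,
) -> bool:
    """Deny by default; transcripts also require the user to be the treating physician."""
    if action not in ROLE_ACTIONS.get(role, frozenset()):
        return False
    if action == "read_scribe_transcript":
        return user_id is not None and user_id == treating_physician_id
    return True
```

Denying anything not explicitly listed means a newly added action is inaccessible until someone deliberately grants it.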

Pillar Three: Physical Safeguards

These requirements address the physical security of systems, devices, and facilities where ePHI exists.

Data residency matters for voice data. Many healthcare organizations prefer U.S.-based servers to ensure data remains under U.S. legal jurisdiction. While HIPAA doesn’t explicitly require domestic data storage, international data transfers introduce additional compliance complexity.

Cloud security requires careful vendor vetting. Major platforms like AWS and Microsoft Azure offer “HIPAA-eligible” environments with appropriate security controls. However, simply using these clouds doesn’t make you compliant. You must:

- Select the correct service configurations (many cloud features aren’t HIPAA-eligible)

- Sign BAAs with the cloud provider

- Enable appropriate access logging and encryption

- Restrict physical access to servers through vendor-managed controls

Workstation security protects devices used to access voice AI systems. Any computer, tablet, or phone that administrators use to configure voice agents or review call transcripts must have:

- Password protection or biometric authentication

- Automatic screen locks

- Encryption for local storage

- Antivirus and security update policies

Device and media disposal procedures ensure PHI doesn’t leak through discarded equipment. When retiring servers, computers, or storage devices that touched voice AI data, you must securely wipe or physically destroy all media containing ePHI.

Critical Challenges Unique to Voice AI

Voice-based artificial intelligence introduces compliance challenges that don’t exist with traditional text-based systems.

The Ambient Capture Problem

Voice AI can be too good at listening. When deploying AI voice agents in clinical settings, such as medical scribes that record doctor-patient conversations, there’s a risk of capturing unauthorized discussions.

Imagine a physician wearing a voice-recording device that’s supposed to document patient encounters. The doctor forgets to turn it off during lunch, and the device records conversations about other patients in the break room. That’s a HIPAA violation.

Solutions include:

- Clear visual indicators showing when recording is active

- Automatic recording cutoffs after specified time periods

- Staff training on when voice AI should be enabled

- Patient consent protocols before voice documentation begins

The Voiceprint Permanence Issue

Voice biometrics offer convenient patient authentication: the AI recognizes who you are by your voice pattern. But voiceprints present unique security risks.

If a password is stolen, you change it. If your voiceprint is compromised, you can’t change your voice. Voiceprint data must be protected with the highest security standards because a breach creates permanent vulnerability.

Best practices for voiceprints include:

- Storing voiceprints as irreversible mathematical representations, not raw audio

- Using voiceprints alongside other authentication factors (multi-factor authentication)

- Allowing patients to opt for alternative verification methods

- Implementing strict access controls for systems storing voiceprint data

The Identity Verification Challenge

Before discussing any medical information, AI voice agents must verify they’re speaking with the correct patient. This is harder than it sounds.

Bad verification: “Please say your name and date of birth.” An eavesdropper or family member could easily provide this information.

Better verification: Multi-factor approaches combining:

- Information the patient knows (date of birth, last four SSN digits)

- Something the patient has (calling from registered phone number)

- Something the patient is (optional voiceprint authentication)

The AI must be programmed to gracefully decline providing medical information if identity can’t be confirmed, and escalate to human verification when needed.
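The multi-factor approach and the decline-and-escalate rule can be sketched as a single decision function. Everything named here (`PatientRecord`, `verify_caller`, `Decision`) is hypothetical, and the choice of date of birth plus registered phone number is only one possible factor combination.

```python
import hmac
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    VERIFIED = "verified"
    ESCALATE_TO_HUMAN = "escalate_to_human"


@dataclass(frozen=True)
class PatientRecord:
    date_of_birth: str      # format is an assumption, e.g. "1980-04-12"
    registered_phone: str   # format is an assumption, e.g. "+15550100"


def _same(a: str, b: str) -> bool:
    """Constant-time comparison, so timing does not leak partial matches."""
    return hmac.compare_digest(a.encode("utf-8"), b.encode("utf-8"))


def verify_caller(
    record: PatientRecord,
    stated_dob: str,
    caller_phone: str,
    voiceprint_match: Optional[bool] = None,
) -> Decision:
    """Require a knowledge factor and a possession factor; voiceprint is optional."""
    knows = _same(stated_dob, record.date_of_birth)
    has = _same(caller_phone, record.registered_phone)
    # None means the voiceprint check was skipped; False means it was tried and failed.
    voice_ok = voiceprint_match is not False
    if knows and has and voice_ok:
        return Decision.VERIFIED
    # Never disclose PHI on a failed check; hand the call to a person instead.
    return Decision.ESCALATE_TO_HUMAN
```

The function has only two outcomes on purpose: there is no “partially verified” state in which the agent might disclose some information.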

Use Cases for Compliant Voice Agents

Understanding the requirements is one thing. Here’s how healthcare organizations actually deploy compliant voice AI technology.

Automated Appointment Scheduling

AI voice agents handle routine scheduling without human staff involvement. Patients call a practice number, and the voice agent:

- Verifies patient identity using registration information

- Checks real-time provider availability

- Books appointments based on patient preferences

- Sends confirmation via text or email

- Handles rescheduling and cancellations

Compliance considerations: The scheduling bot accesses appointment times (PHI) and must verify identity before discussing existing appointments or medical reasons for visits.

Post-Discharge Follow-Ups

After hospital discharge or surgery, AI voice agents conduct wellness checks by calling patients at scheduled intervals. The AI asks:

- How’s your pain level today on a scale of one to ten?

- Are you taking medications as prescribed?

- Have you experienced any complications like fever or unusual swelling?

- Do you need to schedule a follow-up appointment?

Responses are logged in the EHR, with alerts flagging concerning answers for immediate clinical review.

Compliance considerations: These calls generate new PHI (patient-reported symptoms) that must be encrypted and properly documented. The AI needs sophisticated natural language processing to recognize medical urgencies requiring human escalation.
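The flagging step for the four follow-up questions can be sketched with simple rules. The threshold value and the names `FollowUpResponse` and `review_flags` are assumptions for illustration; real escalation criteria would be set by clinicians, and a production system would work from parsed speech rather than clean fields.

```python
from dataclasses import dataclass
from typing import List

PAIN_ALERT_THRESHOLD = 7  # illustrative assumption, not a clinical standard


@dataclass(frozen=True)
class FollowUpResponse:
    pain_level: int            # patient-reported, scale of one to ten
    taking_medications: bool
    has_complications: bool    # e.g. fever or unusual swelling
    wants_follow_up: bool


def review_flags(response: FollowUpResponse) -> List[str]:
    """Return reasons this call needs clinical review; empty means none."""
    flags: List[str] = []
    if not 1 <= response.pain_level <= 10:
        flags.append("pain level out of range; confirm with patient")
    elif response.pain_level >= PAIN_ALERT_THRESHOLD:
        flags.append("high pain level")
    if not response.taking_medications:
        flags.append("medication non-adherence")
    if response.has_complications:
        flags.append("reported complications")
    return flags
```

An out-of-range answer is flagged rather than discarded, since a misheard response is itself a reason for a human to follow up.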

Intake and Triage

Before a doctor joins a telemedicine appointment, an AI voice agent conducts preliminary intake:

- Collects current symptoms and medical history

- Asks screening questions about allergies and current medications

- Assesses urgency to prioritize scheduling

- Generates a pre-visit summary for the clinician

This saves physician time while ensuring comprehensive information capture.

Compliance considerations: Triage AI must never provide medical diagnosis or treatment advice; that would amount to practicing medicine without a license. The voice agent should clearly identify itself as an AI gathering information for a human clinician’s review.

Real-Time Medical Scribes

During clinical encounters, AI voice agents listen to doctor-patient conversations and generate clinical notes in real time. The physician reviews and signs off on the AI-generated documentation.

This dramatically reduces after-hours charting burden while potentially improving documentation quality through consistent formatting and comprehensive capture.

Compliance considerations: Patients must provide informed consent for AI recording. The ambient listening must be controllable (start/stop functions) to prevent capturing private conversations. Generated notes require physician review before becoming part of the legal medical record.

Checklist: Choosing a HIPAA-Compliant AI Voice Partner

Not all voice AI vendors are created equal. Use this checklist when evaluating platforms for healthcare use.

Will they sign a Business Associate Agreement? This is non-negotiable. If a vendor won’t sign a BAA, they’re not suitable for healthcare use, regardless of other features. Request to review their standard BAA before starting evaluation trials.

Does the platform offer zero-retention options? The ability to process voice transiently without storing raw audio files dramatically reduces your risk surface. Ask vendors specifically about their data retention policies and whether you can configure true zero-retention modes.

Do they have a current SOC 2 Type II report? A SOC 2 Type II report shows that an independent auditor tested the vendor’s security controls over a period of time. Strictly speaking it is an attestation rather than a certification, and it is not HIPAA-specific, but it indicates serious security maturity and provides assurance that the vendor operates secure systems.

Do they provide audit logs for every call? You need comprehensive tracking of all PHI access. Confirm the platform generates tamper-proof logs showing call timestamps, participants, data accessed, and any system errors. You should be able to export these logs for your own compliance documentation.

Is there a human-in-the-loop escalation path? AI isn’t perfect. Compliant systems recognize when patient questions exceed the AI’s scope and seamlessly transfer to human staff. Test the vendor’s escalation triggers and ensure patients can always request human assistance.

Where is data stored and processed? Understand the vendor’s server locations, cloud infrastructure, and any third-party subprocessors. Verify that all components in the data processing chain are HIPAA-compliant and covered by appropriate BAAs.

What encryption standards do they use? Confirm they implement AES-256 for data at rest and TLS 1.3 for data in transit. Ask about their encryption key management practices: who controls the keys, how often they are rotated, and what happens if keys are compromised.

How do they handle AI model training? Some AI platforms improve their models using customer data. For healthcare, this is typically unacceptable. Clarify whether your patient voice data will be used to train AI models, and ensure your BAA prohibits this unless you explicitly consent with appropriate de-identification.

What’s their incident response track record? Ask about past security incidents and how they were handled. A vendor that’s never had a security incident either hasn’t been tested or isn’t being transparent. You want a partner with proven incident response capabilities.

Can you review their security documentation? Request access to their HIPAA compliance documentation, security policies, and penetration testing results. Reputable vendors maintain detailed compliance documentation and willingly share it with serious prospects under NDA.

Comparing Standard Voice AI vs. HIPAA-Compliant Voice AI

Understanding the difference between consumer-grade and healthcare-grade voice technology helps justify the investment in proper compliance.

| Feature | Standard Voice AI | HIPAA-Compliant Voice AI |
| --- | --- | --- |
| Business Associate Agreement | Not available | Required and signed before deployment |
| Data Encryption | Basic HTTPS, varies by vendor | AES-256 at rest, TLS 1.3 in transit, enforced |
| Data Retention | Often indefinite for model training | Zero-retention options, configurable policies |
| Access Controls | Simple user accounts | Role-based access, unique IDs, audit trails |
| Patient Identity Verification | Minimal or none | Multi-factor verification before PHI discussion |
| Audit Logging | Basic usage metrics | Comprehensive PHI access logs, tamper-proof |
| Incident Response | Consumer support timelines | Contractual breach notification requirements |
| Subprocessor Management | Undisclosed | All subprocessors disclosed with BAAs |
| Cost | Low or free | Premium pricing reflecting compliance overhead |
| Legal Liability | No healthcare responsibility | Shared liability through BAA |

This table illustrates why free or cheap consumer voice AI platforms are never appropriate for healthcare settings, even for seemingly innocuous tasks like appointment scheduling. The compliance requirements fundamentally change how voice AI systems must be architected, operated, and managed.

Key Requirements Summary: Technical Implementation

For healthcare IT teams implementing voice AI technology, here are the core technical requirements organized by priority.

| Requirement Category | Must-Have Components | Implementation Priority |
| --- | --- | --- |
| Data Protection | AES-256 encryption at rest, TLS 1.3 in transit, encrypted backup systems | Critical – Deploy before production |
| Access Management | Unique user IDs, role-based permissions, multi-factor authentication, automatic session timeouts | Critical – Deploy before production |
| Audit & Monitoring | Comprehensive access logs, real-time alerting, tamper-proof log storage, 6+ year retention | Critical – Deploy before production |
| Identity Verification | Multi-factor patient authentication, registered device recognition, escalation to human verification | Critical – Required for any PHI discussion |
| Data Lifecycle | Zero-retention options, automated data deletion, secure disposal procedures | High – Configure during initial setup |
| Incident Response | Breach notification procedures, system isolation capabilities, forensic logging | High – Establish before production |
| Business Continuity | Backup voice AI systems, failover to human staff, data recovery procedures | Medium – Implement within 90 days |
| Vendor Management | Signed BAAs, subprocessor disclosure, security attestations, compliance documentation | Critical – Complete before vendor selection |

The Future of Compliant Voice Technology

As we move deeper into 2025, voice AI in healthcare is evolving toward even stronger privacy protections.

Edge AI processing represents the next frontier. Instead of streaming patient voices to cloud servers, edge AI processes speech directly on local devices: smartphones, tablets, or on-premises servers. The voice never leaves the healthcare facility, dramatically reducing exposure.

Major tech platforms are developing healthcare-specific voice chips that perform AI inference locally while maintaining HIPAA-grade security. This approach combines the convenience of voice interaction with the security of on-device processing.

Federated learning allows AI models to improve without centralizing patient data. Instead of sending voice recordings to vendors for model training, the AI learns from patterns across multiple healthcare organizations without any individual patient data leaving its home institution.
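The aggregation step can be illustrated with federated averaging, one common method for combining site-level updates (the approach is named here as an example; other aggregation schemes exist). In this sketch each institution sends only its locally trained weight vector and a sample count, never patient audio. The function name and the plain-list representation of weights are assumptions.

```python
from typing import List, Sequence, Tuple


def federated_average(updates: Sequence[Tuple[List[float], int]]) -> List[float]:
    """Average per-site model weights, weighted by each site's sample count."""
    total_samples = sum(count for _, count in updates)
    if total_samples <= 0:
        raise ValueError("at least one site must contribute samples")
    size = len(updates[0][0])
    averaged = [0.0] * size
    for weights, count in updates:
        if len(weights) != size:
            raise ValueError("all sites must send weight vectors of the same length")
        for i, weight in enumerate(weights):
            # Sites with more local data pull the average further toward their weights.
            averaged[i] += weight * count / total_samples
    return averaged
```

Model updates can still leak information about the underlying data, so in practice this is usually combined with further protections such as secure aggregation or added noise.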

Differential privacy techniques add calibrated mathematical noise to data or to statistics derived from it, sharply limiting what can be learned about any one individual while preserving the aggregate patterns needed for AI functionality. As these methods mature, they’ll enable safer data sharing for research and model improvement.
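The classic form of this idea is the Laplace mechanism applied to an aggregate statistic rather than to raw audio. The sketch below releases a noisy count, for example the number of calls that mentioned a given symptom; the function names and the choice of a counting query are assumptions made for illustration.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy (Laplace mechanism)."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    # Adding or removing one patient changes a count by at most 1
    # (sensitivity 1), so the noise scale is 1 / epsilon.
    return true_count + laplace_noise(1.0 / epsilon, rng)
```

Smaller values of `epsilon` mean more noise and stronger privacy. The noise averages out over many records, which is why aggregate patterns survive while any single patient’s contribution is masked.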

Blockchain-based audit trails are emerging to create immutable records of all PHI access. Every time the voice AI accesses patient information, that transaction is recorded in a blockchain that cannot be altered retroactively, providing absolute transparency for compliance auditing.

The healthcare industry is also pushing for standardized certification programs specifically for AI voice platforms. Rather than each healthcare organization individually vetting vendors, standardized certification, similar to HITRUST for general health IT, would streamline vendor selection and provide consistent security baselines.

Final Thoughts: Compliance Enables Innovation

Some healthcare leaders view HIPAA compliance as a burden that slows innovation. This perspective is backwards.

Compliance isn’t a barrier to voice AI adoption; it’s the foundation that makes adoption possible. Patients will only trust voice AI if they know their medical conversations are protected with the same rigor as their written medical records.

Healthcare organizations that prioritize compliance from day one gain multiple advantages. They avoid the costly retrofitting required when non-compliant systems must be rebuilt. They prevent the reputation damage and legal exposure of data breaches. Most importantly, they earn patient trust that enables broader AI adoption.

The voice AI revolution in healthcare is inevitable. Administrative tasks that consume hours of staff time will be automated. Patients will schedule appointments, refill prescriptions, and receive care coordination through natural voice conversations. Physicians will spend more time with patients and less time on documentation.

But this future only arrives safely when built on a foundation of robust HIPAA compliance for AI voice agents. The technical requirements, administrative policies, and vendor partnerships outlined in this guide aren’t optional extras; they’re the essential infrastructure that protects patients while unlocking AI’s potential.

As you evaluate voice AI platforms and plan your implementation strategy, remember that cutting corners on compliance creates technical debt that compounds over time. Invest in proper ePHI security, voice data encryption, and comprehensive BAAs with your AI vendors from the beginning.

The healthcare organizations that get voice AI compliance right in 2025 won’t just avoid penalties; they’ll gain a competitive advantage through faster, safer AI deployment that sets the standard for the industry.

Patient trust is earned through demonstrated commitment to privacy. Voice AI technology that respects that principle doesn’t just meet regulatory requirements; it honors the fundamental obligation healthcare providers have to protect the people they serve.